The Intelligence Map: Finding Hidden Connections

In the world of high-stakes espionage, knowing who a person is is less important than knowing who they are like. To see the truth, you must map the network.

The Scenario

Imagine you are a Lead Profiler at a secret agency. You have a wall covered in thousands of agent files. In the previous chapter (Encoding), you translated every file into a set of numbers.

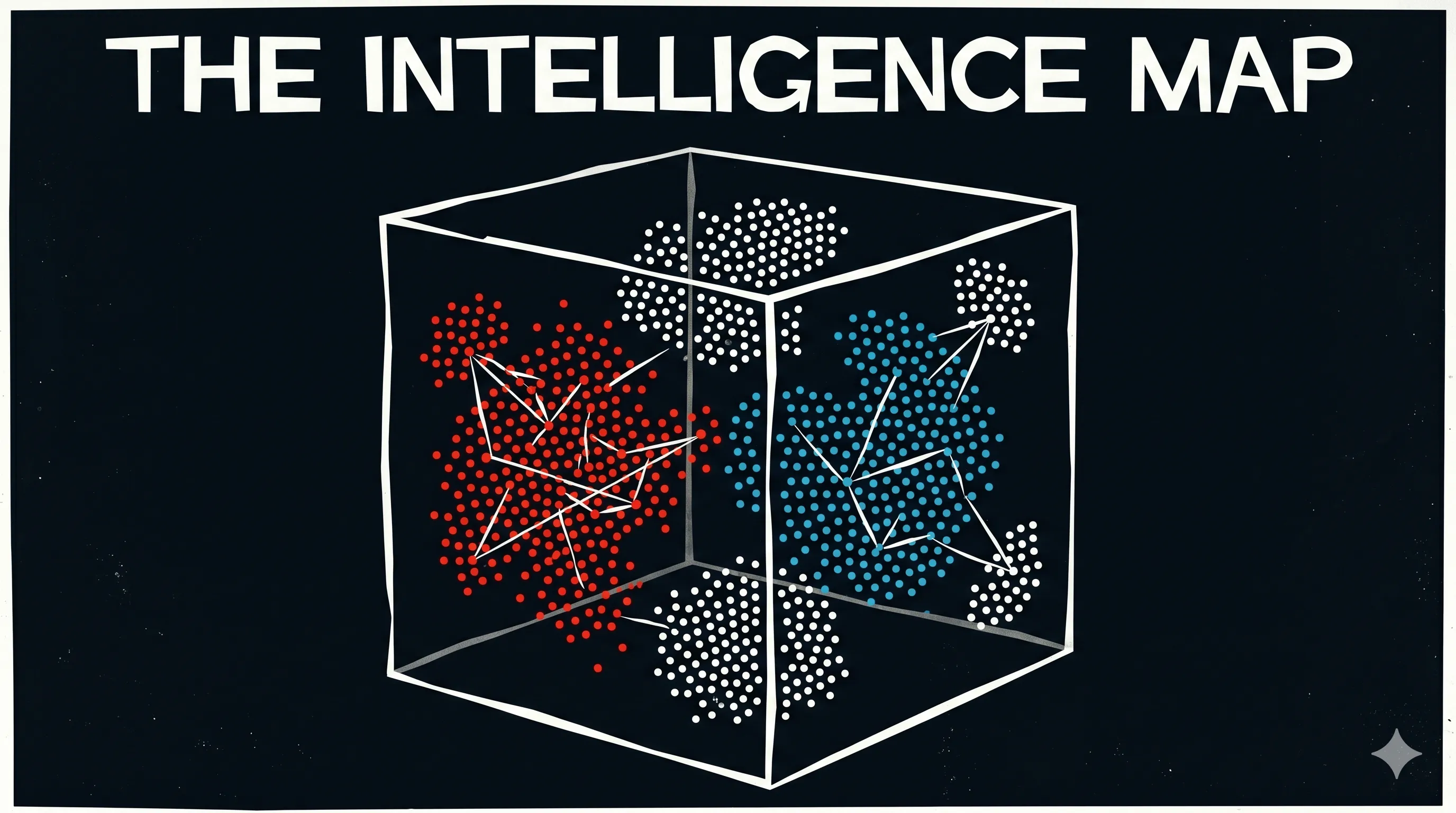

Now, you feed those numbers into a specialized 3D Projector. Suddenly, the files aren’t just sitting in drawers—they are points of light floating in a massive digital room (The Vector Space).

You notice something incredible: files that belong to double agents have drifted into one corner. Field operatives from London are clustered in another. High-ranking diplomats are grouped near the ceiling. By looking at where a point “lives” in this room, you can tell a great deal about it. This multidimensional map is what we call EMBEDDINGS.

The Reality

Encoding gives a word or an image a number, but EMBEDDINGS give it a location.

In an embedding space, “King” and “Queen” sit close to each other. “Apple” and “Orange” are close to each other, but far from “Submarine.” This allows the AI to capture relationships. It doesn’t just see a word; it sees the “neighborhood” that word lives in. This is why AI can treat a “Happy customer” and a “Satisfied buyer” as meaning roughly the same thing: their locations on the embedding map are very close together. (We’ve already seen how this works in practice with Vector Databases.)
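To make “close” and “far” concrete, here is a minimal sketch in Python. The three-number locations are made up by hand for illustration (real embeddings are learned by a model and usually have hundreds of dimensions), and cosine similarity is just one common way to measure closeness, not the only one.

```python
import math

# Hand-made 3-number "locations" for illustration only; these values are
# assumptions, not the output of any real embedding model.
toy_embeddings = {
    "apple":     [0.9, 0.8, 0.1],
    "orange":    [0.8, 0.9, 0.2],
    "submarine": [0.1, 0.2, 0.9],
}

def cosine_similarity(a, b):
    """Return the cosine of the angle between a and b (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

fruit_score = cosine_similarity(toy_embeddings["apple"], toy_embeddings["orange"])
sub_score = cosine_similarity(toy_embeddings["apple"], toy_embeddings["submarine"])

print(f"apple vs orange:    {fruit_score:.2f}")
print(f"apple vs submarine: {sub_score:.2f}")
```

With these toy numbers, the apple/orange score comes out much higher than the apple/submarine score, which is the “same neighborhood” idea expressed as arithmetic.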

The Why

Embeddings are the secret sauce of modern AI. They allow machines to handle nuances, synonyms, and context. Instead of looking for exact matches (which is how traditional keyword search works), modern AI looks for “neighbors.” This makes the system flexible, intuitive, and, most importantly, capable of surfacing connections that humans might miss.
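The difference between exact matching and neighbor search can be sketched in a few lines of Python. Everything here is hypothetical: the phrases, the two-number locations, and the helper names `exact_match` and `nearest_neighbor` are invented for this example, and a real system would get its vectors from an embedding model.

```python
import math

# Hypothetical 2-number locations, chosen by hand for illustration.
documents = {
    "happy customer":   [0.9, 0.1],
    "angry customer":   [-0.8, 0.2],
    "broken submarine": [0.0, -0.9],
}
query_text = "satisfied buyer"
query_vector = [0.85, 0.15]  # assumed location for the query phrase

def cosine_similarity(a, b):
    """Return the cosine of the angle between a and b (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def exact_match(text, docs):
    """Old-style lookup: only finds a document whose text is identical."""
    return [name for name in docs if name == text]

def nearest_neighbor(vector, docs):
    """Embedding-style lookup: returns the document closest to the vector."""
    return max(docs, key=lambda name: cosine_similarity(vector, docs[name]))

exact_hits = exact_match(query_text, documents)
closest = nearest_neighbor(query_vector, documents)

print(exact_hits)  # empty list: no document says "satisfied buyer" word for word
print(closest)     # "happy customer": the nearest point on the toy map
```

The exact-match lookup comes back empty because no stored phrase matches word for word, while the neighbor lookup still lands on “happy customer.”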

The Takeaway

Embeddings turn meaning into distance. If two things are similar, they live in the same neighborhood on the AI’s map.

AI specialists call it: Word Embeddings / Vector Embeddings

Embeddings are a type of representation where individual items (words, images, or users) are mapped to vectors of real numbers in a continuous vector space. The key property is that semantically similar items are mapped to nearby points in the space.

💬 If you had to map your friends in a room, who would be standing closest to each other?

Part 14 (Embeddings) of 25 | #DeepLearningForHumans