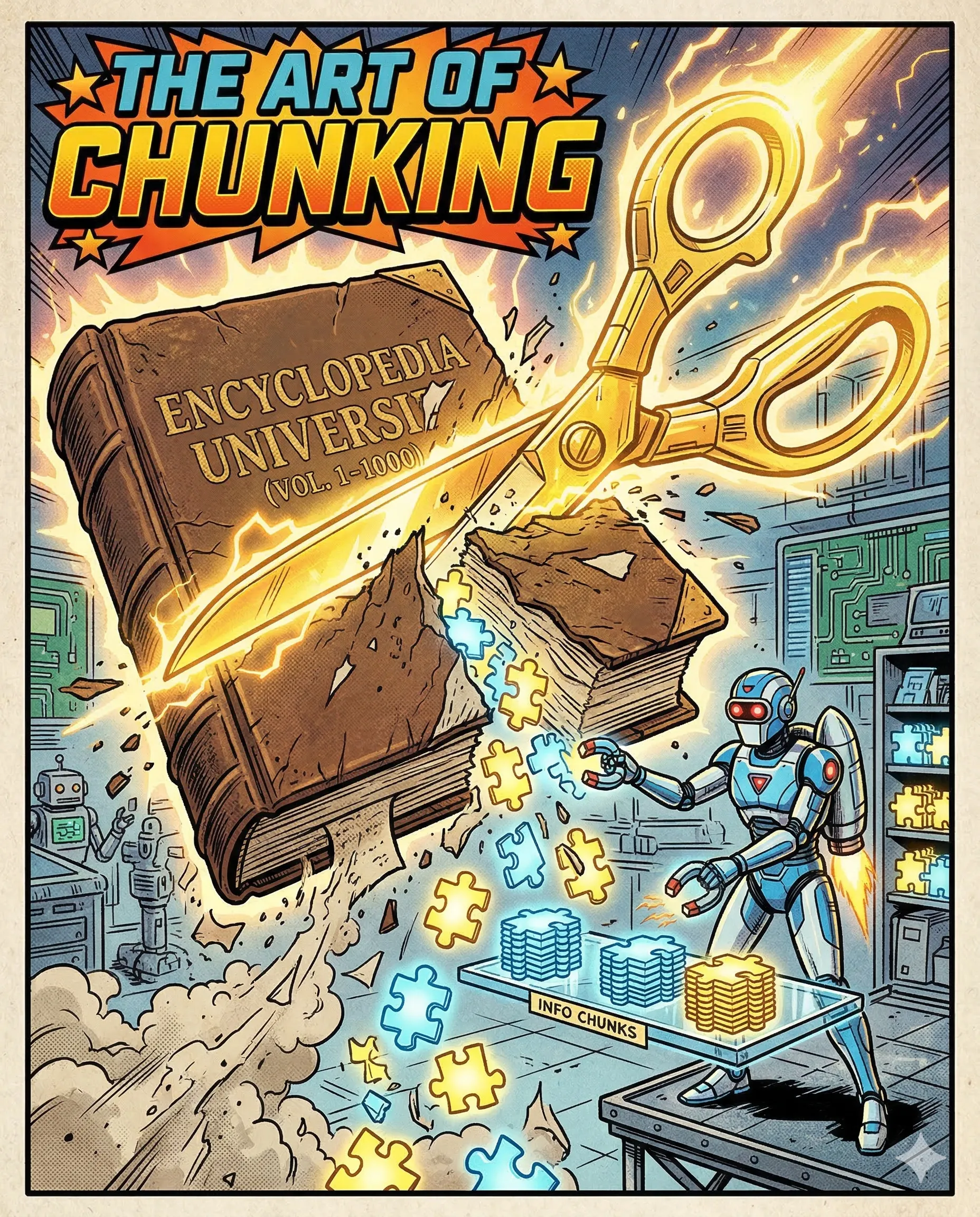

AI's Secret Weapon: Really Good Scissors

Imagine you are trying to swallow an entire watermelon in one bite. You have the hunger, but your mouth has a physical limit. To enjoy the fruit, you must slice it into small, manageable cubes.

This is exactly how AI handles massive amounts of information. Every AI model has a “context window,” which is essentially its short-term memory. It can only process a certain amount of text at once. If you throw a 500-page book at an AI whose window is smaller than the book, the text simply does not fit, and whatever falls outside the window is never seen.

To solve this, we use the “Secret Weapon” of RAG: Chunking.

The mechanism is simple: we take a giant document and use digital scissors to cut it into small, neat pieces called “chunks.” Each chunk typically contains a few paragraphs. We then store these pieces separately in the library. When you ask a question, the system only brings the most relevant chunks to the AI’s attention.
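Here is a minimal sketch of those digital scissors in Python. It assumes paragraphs are separated by blank lines, and the function name `chunk_by_paragraphs` and the `paragraphs_per_chunk` parameter are made up for illustration. Real pipelines often split by tokens or sentences instead.

```python
def chunk_by_paragraphs(text: str, paragraphs_per_chunk: int = 2) -> list[str]:
    """Split text on blank lines, then group paragraphs into chunks."""
    # Assumption: a blank line marks a paragraph boundary.
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks = []
    for start in range(0, len(paragraphs), paragraphs_per_chunk):
        group = paragraphs[start:start + paragraphs_per_chunk]
        chunks.append("\n\n".join(group))
    return chunks


# A tiny stand-in for a long document (hypothetical content).
document = (
    "Intro to the shoes.\n\n"
    "Materials used.\n\n"
    "Cleaning instructions.\n\n"
    "Warranty terms.\n\n"
    "Contact details."
)

chunks = chunk_by_paragraphs(document, paragraphs_per_chunk=2)
for index, chunk in enumerate(chunks):
    print(index, repr(chunk))
```

Five paragraphs with two per chunk give three chunks, and the last one holds the single leftover paragraph.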

In practice, this improves both speed and accuracy. For example, if you are looking for the “cleaning instructions” in a massive 500-page manual for vegan leather shoes, the system retrieves only the two specific paragraphs you need and leaves the rest of the manual out. This keeps the AI focused, so it can answer without getting lost in the noise.
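The cleaning-instructions lookup can be sketched in a few lines. This toy version scores each chunk by how many words it shares with the question. That is a deliberate simplification: production RAG systems usually compare embeddings rather than raw words. The names `tokenize`, `top_chunks`, and the sample manual text are all hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def top_chunks(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return up to k chunks that share the most words with the question."""
    question_words = tokenize(question)
    scored = [
        (len(question_words & tokenize(chunk)), position, chunk)
        for position, chunk in enumerate(chunks)
    ]
    # Drop chunks with no shared words, then sort by score (ties keep document order).
    scored = [item for item in scored if item[0] > 0]
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [chunk for _, _, chunk in scored[:k]]


manual_chunks = [
    "Sizing guide: measure your foot from heel to toe.",
    "Cleaning instructions: wipe the vegan leather with a damp cloth.",
    "Cleaning tip: let the shoes air dry away from direct heat.",
    "Warranty: contact support within 30 days of purchase.",
]

results = top_chunks(
    "What are the cleaning instructions for vegan leather?", manual_chunks, k=2
)
for chunk in results:
    print(chunk)
```

Only the two cleaning chunks come back. The sizing and warranty chunks share no words with the question, so they never reach the AI.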

Success happens when you stop seeing a document as one giant object and start seeing it as a collection of answers. You transition from “searching a book” to “finding the exact puzzle piece.”

The Takeaway: a smart AI reads the whole book, but an efficient AI only reads the relevant chunks.

Why This Matters for Your AI Product

Chunking strategy is often the “make or break” factor in RAG performance. When building a production-ready system:

- Balance is Key: If chunks are too small, they lose context. If they are too large, they waste the context window and increase noise.

- Overlapping: We often include a bit of the previous chunk in the current one (overlap) to ensure ideas don’t get cut in half mid-sentence.

- Metadata: By tagging chunks with page numbers or section titles, you can provide citations, allowing users to verify exactly where the AI found its information.
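The three points above can be combined in one small sketch: a fixed chunk size, a configurable overlap, and metadata attached to every chunk. It counts words rather than tokens to stay dependency-free, and the function name `chunk_with_overlap`, the dictionary keys, and the default sizes are assumptions for illustration, not recommended values.

```python
def chunk_with_overlap(
    text: str, section: str, size: int = 50, overlap: int = 10
) -> list[dict]:
    """Cut text into word windows that share `overlap` words with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap  # how far the window moves forward each time
    chunks = []
    for start in range(0, len(words), step):
        window = words[start:start + size]
        chunks.append({
            "text": " ".join(window),
            "section": section,    # metadata: where the chunk came from
            "start_word": start,   # metadata: position, usable for citations
        })
        if start + size >= len(words):
            break  # the window already reached the end of the text
    return chunks


# Twelve placeholder words make the overlap easy to see.
sample = " ".join(f"w{i}" for i in range(12))
pieces = chunk_with_overlap(sample, section="Care Guide", size=5, overlap=2)
for piece in pieces:
    print(piece["start_word"], piece["section"], "|", piece["text"])
```

Each chunk starts with the last two words of the one before it, so an idea that straddles a boundary still appears whole in at least one chunk. Tuning `size` and `overlap` is where the balance between lost context and extra noise gets decided.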

AI specialists call it: Chunking. The process of breaking down large documents into smaller, logical segments to improve the performance and accuracy of AI retrieval.

If you had to summarize your favorite long book, which three “chunks” would you pick first?

Part 7 of 18 | #RAGforHumans